
Where does your Node program spend its time?

Performance analysis is one of the most difficult challenges in building production software. If a slow application isn’t spending much time on CPU, it could be waiting on filesystem (disk) I/O, network traffic, garbage collection, or many other things. We built the Cloud Analytics tool to help administrators and developers quickly identify these sources of latency in production software. When the app is actually spending its time on CPU, the next step is figuring out what it’s doing: what functions it’s executing and who’s calling them.

One approach is to sample the program at, say, 1000 times per second and examine the 5-10 most frequently seen stacktraces. DTrace lets you do this with the profile provider, whose output might include something like this for a C program:

```
libc.so.1`mutex_trylock_adaptive+0x137          <- stacktrace
libc.so.1`mutex_lock_impl+0xdc
libc.so.1`mutex_lock+0x10
mysqld`_Z10open_tableP3THDP10TABLE_LISTP11st_mem_rootPbj+0x4b1
mysqld`_Z11open_tablesP3THDPP10TABLE_LISTPjj+0x205
mysqld`_Z20open_and_lock_tablesP3THDP10TABLE_LIST+0x1b
mysqld`_Z21mysql_execute_commandP3THD+0x416
mysqld`_Z11mysql_parseP3THDPKcjPS2_+0xf7
mysqld`_Z16dispatch_command19enum_server_commandP3THDPcj+0x6c9
mysqld`_Z10do_commandP3THD+0xca
mysqld`handle_one_connection+0x343
libc.so.1`_thr_setup+0x70
libc.so.1`_lwp_start
7                                               <- count

... (more stacktraces and associated counts)
```
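The aggregation DTrace performs here can be sketched in plain JavaScript to make the idea concrete. This is a minimal sketch, assuming each sample has already been captured as an array of frame strings (leaf first); `topStacks` and the sample data are hypothetical, not part of DTrace. Identical stacktraces share one counter, and the most frequent ones come out first.

```javascript
// Count identical stacktraces and return the `n` most frequent ones.
// Each sample is assumed to be an array of frame strings, leaf frame first.
function topStacks(samples, n) {
  const counts = new Map();
  for (const frames of samples) {
    // Join the frames into one key so identical stacks share a counter.
    const key = frames.join("\n");
    counts.set(key, (counts.get(key) || 0) + 1);
  }
  return [...counts.entries()]
    .map(([key, count]) => ({ frames: key.split("\n"), count }))
    .sort((a, b) => b.count - a.count)
    .slice(0, n);
}

// Hypothetical samples: three land in the same lock path, one elsewhere.
const samples = [
  ["mutex_lock", "open_table", "do_command"],
  ["mutex_lock", "open_table", "do_command"],
  ["memcpy", "net_write", "do_command"],
  ["mutex_lock", "open_table", "do_command"],
];
console.log(topStacks(samples, 5));
```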

Brendan has a great example of using this to find a 2000x win in GNU grep. Knowing that many samples share a particular stacktrace like this one often points you right to the performance problem. But for dynamic environments like Python or Node.js, this information is very hard to obtain. Although the V8 VM supports profiling, it has to be enabled when you start the program, and this adds overhead to the entire execution of the program. That’s a non-starter for most production deployments. And although you can use DTrace to profile such programs, by default it sees only the native stack frames. In environments like Python, those native frames belong to the interpreter itself, which makes them fairly useless:

```
libpython2.6.so.1.0`PyObject_Free+0xe
libpython2.6.so.1.0`PyObject_Realloc+0xcb
libpython2.6.so.1.0`_PyString_Resize+0x7f
libpython2.6.so.1.0`get_line+0x333
libpython2.6.so.1.0`file_readline+0xa8
libpython2.6.so.1.0`PyCFunction_Call+0x63
libpython2.6.so.1.0`PyEval_EvalFrameEx+0x4099
libpython2.6.so.1.0`PyEval_EvalCodeEx+0x7d8
libpython2.6.so.1.0`PyEval_EvalCode+0x2f
libpython2.6.so.1.0`run_mod+0x41
libpython2.6.so.1.0`PyRun_FileExFlags+0x7e
libpython2.6.so.1.0`PyRun_SimpleFileExFlags+0x32c
libpython2.6.so.1.0`PyRun_AnyFileExFlags+0x69
libpython2.6.so.1.0`Py_Main+0xa70
python2.6`main+0x28
python2.6`_start+0x80
```

You can’t tell what Python-level functions are being called here. In Node.js things are even worse because the VM compiles JavaScript functions and invokes them directly on the heap, so you often don’t see any symbols at all:

```
0xfc618bc0
0xfc61bd62
0xfe870841
0xfc61c1f3
0xfc617685
0xfe870841
0xfc6154d7
0xfe870e1a
0xfe8700a6
node-dtrace`_ZN2v88internalL6InvokeEbNS0_6HandleINS0_10JSFunctionEEENS1_INS0_6ObjectEEEiPPPS4_Pb+0x1fc
node-dtrace`0x85e6d50
```

However, through a mechanism called a ustack helper, DTrace can be taught to understand V8 stack frames, giving you a stacktrace that looks more like this (colors and labels added for clarity):

<pre><span style="color: #007700">libc.so.1`gettimeofday+0x7 <- libc gettimeofday call
Date at position <- JavaScript "Date" function
<< adaptor >>
<< constructor >> <- (invoked as a constructor)</span>
<span style="color: #000077">(anon) as exports.active at timers.js position 7590
(anon) as Socket._write at net.js position 21336
(anon) as Socket.write at net.js position 19714
<< adaptor >>
(anon) as OutgoingMessage._writeRaw at http.js position 21526
(anon) as OutgoingMessage._send at http.js position 20434
<< adaptor >>
(anon) as OutgoingMessage.end at http.js position 35062 <- re-entering node library
<< adaptor >></span>
<span style="color: #cc0000">(anon) as a at /home/dap/work-server.js line 25
handle at /home/dap/work-server.js line 13 <- invoking our request handler</span>
<span style="color: #000077">(anon) as EventEmitter.emit at events.js position 3532
<< adaptor >>
(anon) as parser.onIncoming at http.js position 80542
(anon) as parser.onHeadersComplete at http.js position 4904 <- parsing HTTP headers
<< internal >>
<< entry >> <- entering JavaScript</span>
node-dtrace`_ZN2v88internalL6InvokeEbNS0_6HandleINS0_10JSFunctionEEENS1_INS0_6ObjectEEEiPPPS4_Pb+0x1fc
node-dtrace`0x85e6d50</pre>

The helper is built into the “node” binary and translates each stack frame that it understands into a text description that typically includes the function name, the inferred name (for anonymous functions), and the file and position where the function is defined. Unrecognized stack frames are left alone, so you can see native C/C++ frames mixed with JavaScript frames. If line numbers are available, the position includes the line number where the function was defined; if not, only the character position in the file is printed. V8 only has line number information available for files that have previously been used in an exception stack trace.
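If you post-process these stacks yourself, you need to pull those pieces back out of each frame description. The sketch below assumes the format is exactly what the sample output above shows ("NAME [as INFERRED] at FILE line|position N"); that pattern is inferred from the example rather than from a specification of the helper, and `parseFrame` is a hypothetical name. Anything that doesn't match (native frames, V8 pseudo-frames) is passed through untouched, mirroring how the helper leaves unrecognized frames alone.

```javascript
// Parse one frame description in the format seen in the sample output, e.g.
// "(anon) as Socket._write at net.js position 21336" or
// "handle at /home/dap/work-server.js line 13". The pattern is an assumption.
const JS_FRAME = /^(.+?)(?: as (.+?))? at (.+) (line|position) (\d+)$/;

function parseFrame(text) {
  const m = JS_FRAME.exec(text.trim());
  if (m === null) {
    // Native C/C++ frames and V8 pseudo-frames are returned unparsed.
    return { kind: "other", text };
  }
  return {
    kind: "js",
    name: m[1], // function name, "(anon)" for anonymous functions
    inferredName: m[2] || null, // V8's inferred name, if there is one
    file: m[3],
    unit: m[4], // "line" if V8 had line info for the file, else "position"
    value: Number(m[5]),
  };
}

console.log(parseFrame("(anon) as Socket._write at net.js position 21336"));
console.log(parseFrame("handle at /home/dap/work-server.js line 13"));
console.log(parseFrame("libc.so.1`gettimeofday+0x7"));
```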

Reading from the bottom up, we see the initial entry into JavaScript from node, then some frames in the node library that process HTTP parser events, then the call into our program to handle the HTTP request, then a call back into the node library to finish sending the response, and finally native code called by the Date constructor, which was invoked as part of sending the response.

There are a few frames that you might be surprised to see if you’re not familiar with V8 internals:

- entry: frame transitioning from C++ to JavaScript
- exit: frame transitioning from JavaScript to C++
- constructor: the above frame’s function is being invoked as a constructor
- adaptor: adaptor used when a function is invoked with a different number of arguments than it expects
- internal: internal V8 frame (does not correspond to a JavaScript frame)
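When comparing stacks by their JavaScript frames alone, these pseudo-frames are mostly noise. A hypothetical helper that recognizes the `<< label >>` lines and filters them out might look like this; it assumes the labels always appear in exactly that form, as they do in the sample output, and the names `pseudoFrameLabel` and `withoutPseudoFrames` are made up for illustration.

```javascript
// Meanings of the V8 pseudo-frames seen in the helper's output, keyed by label.
const PSEUDO_FRAMES = new Map([
  ["entry", "transition from C++ into JavaScript"],
  ["exit", "transition from JavaScript into C++"],
  ["constructor", "the frame above is invoked as a constructor"],
  ["adaptor", "argument-count adaptor"],
  ["internal", "internal V8 frame, not a JavaScript function"],
]);

// Return the label for lines like "<< adaptor >>", or null for anything else.
function pseudoFrameLabel(text) {
  const m = /^<<\s*(\w+)\s*>>$/.exec(text.trim());
  return m !== null && PSEUDO_FRAMES.has(m[1]) ? m[1] : null;
}

// Drop pseudo-frames, keeping JavaScript and native frames in order.
function withoutPseudoFrames(frames) {
  return frames.filter((frame) => pseudoFrameLabel(frame) === null);
}

console.log(withoutPseudoFrames([
  "(anon) as Socket.write at net.js position 19714",
  "<< adaptor >>",
  "(anon) as OutgoingMessage._writeRaw at http.js position 21526",
]));
```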

Now that we can have DTrace output JavaScript-friendly stacktraces, we can use the profile provider as described above to identify common stacktraces and thus find out where any Node program is spending its time.

Instructions

The DTrace ustack helper landed in the upcoming Node v0.6.7, and the tools for generating a flame graph are available on GitHub. With this, you’ve got all the pieces to try this out on your own programs, though there are a few caveats to be aware of for now.


First, you must be running on an Illumos- (or Solaris-)based system. ustack helpers don’t work at all on Mac OS and appear to be broken on FreeBSD. If you don’t have such a system and want to play around, try a SmartOS live CD (it’s really easy).


You must also have access to modify kernel tunables, as the default limits are too low to support the V8 helper. (We’ll be rolling these tunable changes across the Joyent cloud so that SmartMachine customers can skip this step, but that hasn’t been completed yet.) Use MDB or /etc/system to set dtrace_helper_actions_max from 32 (the default) to at least 128:

```
# echo "dtrace_helper_actions_max/W 0t128" | mdb -kw
dtrace_helper_actions_max: 0x20 = 0x80
```

(Update: modern versions of SmartOS need no tuning, so you can skip this step.)


Build Node with ustack helper support by running

```
./configure --with-dtrace
```

and you’ll get support for the ustack helper as well as additional DTrace probes.

Run your program as normal. I’ll use this sample program:

```javascript
/*
 * This script demonstrates a simple stack trace involving an anonymous
 * function, then goes into an infinite loop so you can more easily catch it
 * with DTrace. One caveat: we grab a stack trace at the start to force V8 to
 * compute line number information for this script. If we didn't have this,
 * DTrace would print out position-in-file information instead of line numbers.
 */
new Error().stack;

function main() { func1(); }
function func1() { func2(); }

function func2()
{
    (function () {
        for (;;)
            ;
    })();
}

main();
```

which I run like this:

```
$ node loop-anon.js &
[1] 1791
```


Now we’ll use this DTrace invocation to profile our program for 60 seconds and aggregate on stacktrace:

```
# dtrace -n 'profile-997/execname == "node" && arg1/ { @[jstack(40, 2000)] = count(); } tick-60s { exit(0); }' > out.stacks
```

The script automatically stops after 60 seconds. At this point, the output file is already pretty useful, showing JavaScript stacktraces sorted by frequency count. We can also use this file to generate flame graphs (below).

If you’re not familiar with DTrace, this invocation has a few important pieces:

- `profile-997`: samples on all CPUs at 997Hz. We use 997 instead of the more obvious 1000 to avoid sampling in lockstep with periodic activity.
- `execname == "node"`: with this predicate, the probe’s body only fires when the currently running program is “node”, to avoid sampling other processes on the system. You could omit this and sample everything.
- `arg1`: for profile probes, arg1 is the current user-level instruction pointer, so testing that it is non-zero ensures that we only fire the probe body for samples that interrupt userland code.
- `@[jstack(40, 2000)] = count();`: the body of the probe populates an aggregation that counts the number of times each userland stacktrace has been seen. We use jstack to collect the userland stack trace. We gather 40 frames and give the ustack helper 2000 bytes of buffer for translating the stack frames.
- `tick-60s { exit(0); }`: causes the DTrace invocation to exit after 60 seconds.
- `> out.stacks`: saves the output to out.stacks rather than printing it to stdout.
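Why 997 rather than 1000 can be checked with a small simulation. It assumes a hypothetical task that is busy for the first 500 microseconds of every millisecond (the kind of pattern a 1000Hz timer might produce), so its true busy fraction is 0.5, and counts how many samples land while it is busy. The function `observedBusyFraction` and its parameters are illustrative, not part of DTrace.

```javascript
// Simulate sampling a periodic task and return the fraction of samples that
// land while it is busy. The task runs for the first `busyUs` microseconds of
// every `periodUs`; the first sample fires at `offsetUs`.
function observedBusyFraction(sampleHz, periodUs, busyUs, offsetUs, nSamples) {
  const intervalUs = 1e6 / sampleHz;
  let hits = 0;
  for (let k = 0; k < nSamples; k++) {
    const t = offsetUs + k * intervalUs;
    if (t % periodUs < busyUs) {
      hits++;
    }
  }
  return hits / nSamples;
}

// The task is really busy 50% of the time.
const at1000 = observedBusyFraction(1000, 1000, 500, 200, 10000);
const at997 = observedBusyFraction(997, 1000, 500, 200, 10000);
console.log(at1000, at997);
```

At 1000Hz every sample hits the same phase of the task, so the result is 1 (and with an offset of 700 it would be 0); at 997Hz the sampling phase drifts across the whole period and the estimate lands close to 0.5.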

If you run through these steps with the above example program, you should see all samples with the same or nearly identical stacktraces, since the program quickly enters a tight loop. For a more interesting example, I ran this on one of our Cloud Analytics services. From the output you can see that not all of the stacktraces are complete; see below for details on that.

Scaling up

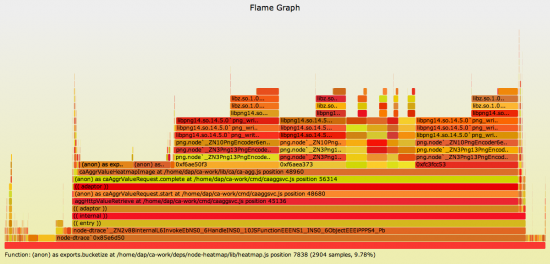

We believe this DTrace-based profiling support for Node.js will be a huge leap for understanding the performance of individual Node programs in production. We’re also exploring ways of scaling this up to many instances of the same program, or cases where there’s no single hot stacktrace but rather one long-running function that calls many different functions during its execution. For such cases, we’ve been looking at flame graphs to visualize overlapping stacktraces. Here’s a flame graph based on the above output that shows where the Cloud Analytics aggregator (itself a Node.js program) spends its time:

Try clicking that graph for an interactive SVG where you can inspect individual frames.

From here we can see that most time is spent generating heatmaps (not surprisingly, if you saw our OSCON or Surge presentations that talk about our architecture). We can also tell how much time is spent bucketizing the data in preparation for rendering the heatmap vs. generating the PNG image itself.
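Comparisons like "bucketizing vs. generating the PNG" come down to inclusive time: the share of all samples whose stack contains a given function anywhere, which is what a frame's width in a flame graph represents. A minimal sketch, assuming stacks have already been aggregated into `{ frames, count }` records; the function names in the data (`renderHeatmap`, `bucketize`, `encodePNG`) are made up and are not the aggregator's real functions.

```javascript
// Fraction of all samples whose stack contains a frame matching `name`.
// In a flame graph this is the total width of that function's boxes.
// Each record is { frames: [leaf, ..., root], count }.
function inclusiveShare(stacks, name) {
  let total = 0;
  let matching = 0;
  for (const { frames, count } of stacks) {
    total += count;
    // Substring match (a simplification) so "(anon) as foo at ..." matches
    // "foo"; each stack counts once even if the function recurses.
    if (frames.some((frame) => frame.includes(name))) {
      matching += count;
    }
  }
  return total === 0 ? 0 : matching / total;
}

// Hypothetical aggregated samples; the function names are made up.
const stacks = [
  { frames: ["bucketize", "renderHeatmap", "main"], count: 30 },
  { frames: ["encodePNG", "renderHeatmap", "main"], count: 50 },
  { frames: ["parseRequest", "main"], count: 20 },
];
console.log(inclusiveShare(stacks, "renderHeatmap")); // 0.8
console.log(inclusiveShare(stacks, "bucketize")); // 0.3
```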

You can easily generate flame graphs for your own programs. Collect data as shown above, then use the stackcollapse and flamegraph tools to generate the graph.
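The core of that pipeline is "folding" each stack into a single line. The sketch below is a rough illustration of that step, not a port of the stackcollapse tool: it assumes out.stacks holds DTrace's usual aggregation layout (frames printed leaf first, one per line, then a line with the count, records separated by blank lines) and emits "root;...;leaf count" lines. `foldStacks` is a hypothetical name, and unlike a real tool it keeps instruction offsets such as +0x1fc as part of each frame.

```javascript
// Fold DTrace aggregation output into "root;...;leaf count" lines, the
// input format flame graph generators consume. DTrace prints each stack
// leaf-first, one frame per line, followed by a line holding its count.
function foldStacks(dtraceOutput) {
  const folded = new Map();
  let frames = [];
  for (const raw of dtraceOutput.split("\n")) {
    const line = raw.trim();
    if (line === "") {
      // Blank lines separate records; this also discards stray lines such as
      // a probe header printed before the first stack.
      frames = [];
    } else if (/^\d+$/.test(line)) {
      // A bare number ends a record: it's the sample count for `frames`.
      if (frames.length > 0) {
        const key = frames.slice().reverse().join(";");
        folded.set(key, (folded.get(key) || 0) + Number(line));
      }
      frames = [];
    } else {
      frames.push(line);
    }
  }
  return [...folded].map(([key, count]) => `${key} ${count}`);
}

const sample = "\n  a`leaf+0x1\n  b`mid+0x2\n  c`root+0x3\n    7\n\n  x`other+0x4\n  c`root+0x3\n    2\n";
console.log(foldStacks(sample).join("\n"));
```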

Conclusions

With the V8 ustack helper, you can profile a running Node program to see what functions it’s spending time in. Because it’s DTrace-based, you can switch it on and off at will and use it safely in production, with literally zero overhead when it’s not enabled and minimal overhead when it is. (There are also ustack helpers for Python and Java.)

There are still some cases that the ustack helper doesn’t handle well that lead to some artifacts in the output. For example, in the above aggregator flame graph, three of the frames called from caAggrValueHeatmapImage are actually the same function, but because the helper doesn’t resolve them, they appear as distinct hex addresses and so show up as three silos instead of one.

Another artifact appears to result from the fact that internal V8 stack frames don’t use frame pointers. Omitting frame pointers is a common optimization, but it destroys observability, because without frame pointers you can’t reliably walk the stack to collect a stacktrace. More investigation is needed to determine whether this is actually the problem and whether disabling this optimization would be a reasonable tradeoff.
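Why missing frame pointers lose frames can be shown with a simulated stack walk. This is a generic illustration of x86-style frame-pointer chains over made-up memory, not V8's actual frame layout; `walkStack`, the addresses, and the functions `main`, `a`, and `b` are all hypothetical. Each frame that keeps a frame pointer stores its caller's frame pointer at [fp] and its return address just above it; a function that skips this leaves the register pointing at its caller's frame, so the walker never reads the return address into that caller.

```javascript
// Simulated frame-pointer stack walk. `memory` maps addresses to words; a
// frame that keeps a frame pointer stores the caller's fp at [fp] and its
// return address at [fp + wordSize].
function walkStack(memory, pc, fp, wordSize, maxFrames) {
  const pcs = [pc];
  while (fp !== 0 && pcs.length < maxFrames) {
    const savedFp = memory.get(fp);
    const returnAddr = memory.get(fp + wordSize);
    if (savedFp === undefined || returnAddr === undefined) {
      break; // unknown memory: the walk can't continue
    }
    pcs.push(returnAddr);
    fp = savedFp;
  }
  return pcs;
}

// Hypothetical stack for main() -> a() -> b(), 8-byte words. 0xa1 is a
// return address inside a(), 0x91 inside main(), 0x01 in the startup code.
const memory = new Map([
  [0x1000, 0], [0x1008, 0x01],      // main's frame: end of the chain
  [0x0f00, 0x1000], [0x0f08, 0x91], // a's frame: returns into main
  [0x0e00, 0x0f00], [0x0e08, 0xa1], // b's frame: returns into a
]);

// b() keeps a frame pointer: the walk finds b, a, main, and startup.
console.log(walkStack(memory, 0xb1, 0x0e00, 8, 40));
// If b() omitted its frame pointer, fp would still be a's frame (0x0f00)
// while sampling inside b(), so 0xa1 is never read and a() vanishes.
console.log(walkStack(memory, 0xb1, 0x0f00, 8, 40));
```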

Nevertheless, for us this tool has been a floodlight in an otherwise pitch-black room. It’s unfortunate that ustack helpers don’t work on Mac OS or FreeBSD, but perhaps with the increased attention on performance analysis in dynamic environments like Node, those systems will address the underlying issues. At Joyent, we’ll be updating both the kernel and the node binaries that we ship to fully support DTrace-based profiling out of the box, and we’re exploring ways of incorporating this data into Cloud Analytics.